The full video of the presentation is available on YouTube.

The source code is available on GitHub.


Combining Augmented and Virtual Reality for Remote Collaboration.

This project was developed as part of my Master’s Degree dissertation. The sample enables real-time transmission of point clouds, captured from a 3D scene with a mobile device, to a VR headset.
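The wire format used by the project is not described here. As a rough sketch only, assume each point carries a float32 position and an 8-bit RGB colour. Under that assumption, a frame could be packed into a single compact binary buffer before it is sent to the headset. The layout and the `encode_frame`/`decode_frame` names are hypothetical and are not taken from the project's source.

```python
import struct
from typing import List, Tuple

# Hypothetical layout, assumed for illustration (not the project's actual format):
#   header   : uint32 point count, little-endian
#   per point: 3 x float32 position (x, y, z) + 3 x uint8 colour (r, g, b)
HEADER = struct.Struct("<I")
POINT = struct.Struct("<3f3B")  # 15 bytes per point; "<" disables padding

Point = Tuple[float, float, float, int, int, int]


def encode_frame(points: List[Point]) -> bytes:
    """Pack one point-cloud frame into a single binary buffer.

    Positions are stored as float32, so values not exactly representable
    in 32 bits lose some precision.
    """
    buf = bytearray(HEADER.size + POINT.size * len(points))
    HEADER.pack_into(buf, 0, len(points))
    offset = HEADER.size
    for p in points:
        POINT.pack_into(buf, offset, *p)
        offset += POINT.size
    return bytes(buf)


def decode_frame(data: bytes) -> List[Point]:
    """Inverse of encode_frame: read the point count, then each fixed-size record."""
    (count,) = HEADER.unpack_from(data, 0)
    return [
        POINT.unpack_from(data, HEADER.size + i * POINT.size)
        for i in range(count)
    ]
```

With fixed-size records, the receiving side can locate each point with a simple offset calculation. A real-time pipeline would likely also need compression or downsampling, which this sketch leaves out.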

More details about the implementation and findings have also been described in this session at the Global XR Conference 2022. Slides are available here.

Point cloud acquisition has been adapted from the iPad LiDAR Depth Sample project.
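The adapted sample's code is not reproduced here. A common way to turn a depth map into a point cloud is to back-project each pixel `(u, v)` with depth `d` through the pinhole camera model: `x = (u - cx) * d / fx`, `y = (v - cy) * d / fy`, `z = d`. Here `fx`, `fy`, `cx` and `cy` are the camera intrinsics. The sketch below makes several simplifying assumptions. It takes a depth map in metres as nested lists, treats non-positive depths as invalid, and ignores platform-specific axis conventions as well as any resolution mismatch between the depth and colour images. `unproject_depth` is a hypothetical helper name.

```python
from typing import List, Sequence, Tuple


def unproject_depth(
    depth: Sequence[Sequence[float]],
    fx: float,
    fy: float,
    cx: float,
    cy: float,
) -> List[Tuple[float, float, float]]:
    """Convert a depth map (metres) into camera-space 3D points (pinhole model).

    depth[v][u] is the depth of the pixel in row v, column u.
    Pixels with non-positive depth are treated as invalid and skipped.
    """
    points = []
    for v, row in enumerate(depth):
        for u, d in enumerate(row):
            if d <= 0.0:
                continue  # no valid depth for this pixel
            x = (u - cx) * d / fx
            y = (v - cy) * d / fy
            points.append((x, y, d))
    return points
```

Each valid pixel yields one point, so the density of the resulting cloud follows directly from the resolution of the depth map.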

Full source code available on GitHub.


The full video of the presentation is available on YouTube.

The new Unity application support is particularly noteworthy: it enables a complete assessment of a project's C# scripts to identify improvements that can be applied to the code. Performance is always a top priority in Unity applications, so automated tools that highlight possible enhancements are a fundamental part of the project lifecycle.

For this reason, the NDepend default settings have been updated so that they no longer flag false positives. For example, the "Fields should be declared as private" rule is now disabled for Unity projects, because for performance reasons it is often better to use fields instead of properties (some excellent presentations about performance from the Unite Now 2020 conference are available here and here). Another improvement in v2021.1 concerns the resolution of assembly references: when a third-party or framework assembly referenced by the application assemblies is not found at analysis time, it is now built from its references instead of being reported as not found.

To start, I downloaded the trial version from the official site and installed the product on my machine:

Additionally, a Visual Studio extension is available and can be installed for IDE integration:

I often use JetBrains Rider as an editor for Unity applications, so I found it convenient to rely on the standalone VisualNDepend executable for all the code analysis.

To evaluate the features available in the new release, I opened an old project I often use as a playground, which I know needs improvements in terms of both code and functionality. Using VisualNDepend, I selected the Visual Studio solution generated by Unity and chose the corresponding assembly, Assembly-CSharp, which contains the custom scripts:

Once report generation had completed, I could open the dashboard to visualise the results. Selecting the desired namespace highlighted its specifics:

From there, a dependency graph shows the relations between the different classes in more detail. I personally find it very informative for understanding the project structure, and this visualisation made it easier to analyse the technical debt associated with the different parts:

I found the integrated CQLinq feature particularly useful, as it lets you write LINQ queries over the code issues:

At this stage, I could quickly identify potential improvements in the various C# script classes and refine the results further with the integrated query tools.

The integrated Unity support in NDepend is a valuable tool for ensuring good code quality in Unity projects, and I will definitely integrate it into my development workflow.

Happy coding!


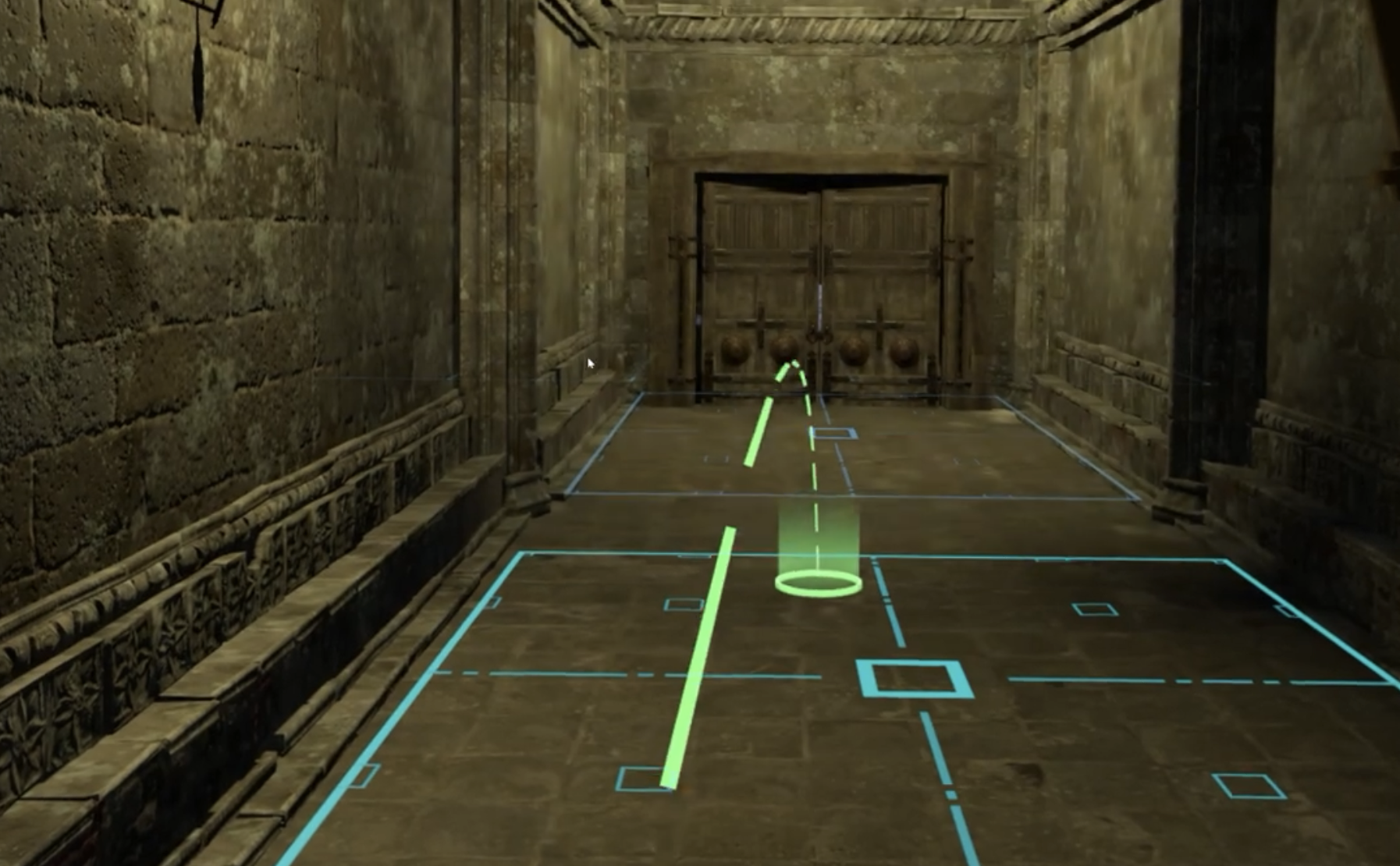

I built this simple game, targeting Oculus Rift and Windows Mixed Reality headsets, using Unity and the SteamVR SDK.

✓ Developed all the game mechanics and components from the ground up, reaching a frame rate of 90fps to avoid motion sickness.

✓ Implemented all the VR locomotion and interactions using the SteamVR Unity SDK.

✓ Enhanced the project with speech recognition to provide help to the user and improve presence in VR.

✓ Added Natural Language Processing (NLP) using Microsoft Azure Cognitive Services and Microsoft Azure Language Understanding (LUIS).

✓ Used assets from the Unity Asset Store for the environment.
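The 90fps target leaves a fixed time budget for each frame. Within that budget the game logic has to run and both eyes have to be rendered:

$$
t_{\text{frame}} = \frac{1000\ \text{ms}}{90} \approx 11.1\ \text{ms}
$$

For comparison, a 60fps target allows about 16.7 ms per frame, so the VR target is considerably tighter.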

More details about the implementation and findings have also been described in this session at the NDC London 2021 Conference. Slides are available here.

The source code is available on GitHub:


I built this simple game, targeting Oculus Rift and Windows Mixed Reality headsets, using Unity and the SteamVR SDK.

The goal is to collect all the stars available in the environment with a single launch of the ball from the platform and then reach the goal target.

The user can navigate the environment with the Oculus Touch controllers, teleporting with the left-hand controller thumbstick. The object selection menu is activated with the right thumbstick, and the trigger buttons are used to interact with objects.

Full source code available on GitHub.


A simple VR scene targeting mobile headsets (Oculus Go) that illustrates interactions with virtual characters and the Plausibility Illusion.

The source code is available on GitHub:
